Being-Dex: Breaking through the "last centimeter" of practical dexterous manipulation in just 30 minutes

Dexterous manipulation models have long been trapped in a "data-hardware" deadlock: real-robot data is scarce, models lack cross-embodiment generality, and hardware embodiments have not yet converged. To break this bottleneck, the industry has settled on a consensus path of "pre-trained base + post-training adaptation". For example, NVIDIA's GR00T is pre-trained on a large volume of diverse real-robot data and synthetic data.

As the first VLA model pre-trained on large-scale human manipulation videos, Being-H learns human dexterous-manipulation priors from millions of first-person videos of human hands.

The core value of these approaches is that pre-training gives them generalizable vision-language-action priors, so the model has the potential to adapt to a wide range of tasks and scenarios.

However, pre-training data cannot cover every task and every robot. In real-world deployments, the base model can only output roughly correct plans and actions, which are not enough to actually complete the task. This "last centimeter" still has to be bridged through post-training.

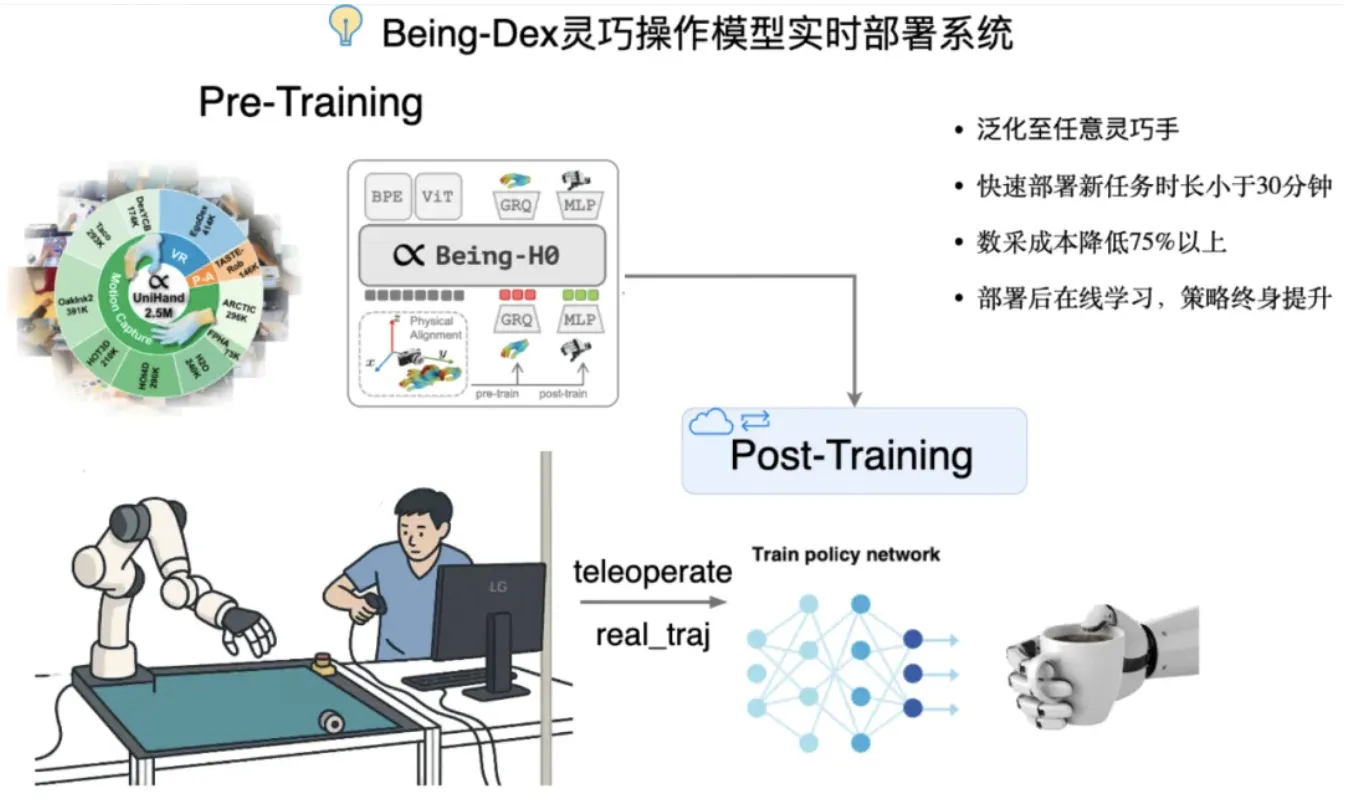

Against this background, BeingBeyond has proposed a new solution: Being-Dex, a framework that integrates pre-training and post-training and enables the base model to learn a new task on a real robot within 30 minutes.


The post-training phase of Being-Dex starts from the Being-H pre-trained base. Through a closed loop of post-training data collection, online model learning, and real-time deployment verification, it takes a new task from data collection to autonomous completion within 30 minutes. It supports online learning and rapid deployment for unseen scenarios, unseen tasks, and unseen objects with a success rate above 90%, allowing embodied foundation models to evolve rapidly on real robots.

Compared with the traditional VLA pipeline of data collection, data cleaning, and offline post-training, Being-Dex significantly improves both learning efficiency and task success rate.

This efficiency gain stems from Being-Dex's closed-loop architecture. By pairing "pre-training to lay the foundation" with "post-training for rapid iteration", it gains clear advantages in three dimensions: continuous evolution, hardware adaptability, and adaptability to scenarios and tasks.

Continuous evolution: a qualitative shift from "learning from experience" to "self-iteration"

Notably, during teleoperation humans collect only successful demonstrations of the task. Even so, once post-training on top of Being-H has converged, the policy generalizes to any arrangement, combination, and placement of objects within the camera's field of view.

This capacity for evolution significantly lowers the cost for robots to learn new skills. The cycle of data collection and training iteration, which used to take more than a day, shrinks to just 30 minutes, and the required training data drops to a few dozen demonstrations, achieving an intelligent leap of "learning faster and faster".

Hardware compatibility: a backward-compatible pre-trained base and dynamically adaptable post-training

In the pre-training phase, the Being-H base learns from human hand videos, so the hand configurations it learns can be mapped onto robot embodiments. This covers most mainstream hardware platforms on the market, adapts to dexterous hands of any shape, size, and number of fingers, and solves the problem of data universality.

Dual adaptation to scenarios and tasks: seamless expansion from basic skills to specialized abilities

The "general language-to-action mappings" mastered by the pre-trained base (basic operations such as grasping, pushing, pulling, and rotating) give the model preliminary cross-scenario capability.

Post-training then takes the model from a general-purpose base to specialized skills through scenario-specific data collection and task-oriented training. For example, in precision assembly the model specifically improves the accuracy of its 6D pose estimation, and when gently grasping irregular objects it optimizes its grasp-point strategy. This dual adaptation lets robots adapt quickly to diverse scenarios such as industrial assembly and home services, achieving "plug-and-play" generalized deployment.

About Zhizai Wujie (BeingBeyond):

The Zhizai Wujie team has pioneered a paradigm for large-scale training of general-purpose humanoid robots on internet videos, building a full-stack technical closed loop that spans multimodal perception and motion generation (Being-M series), hand motion generation (MEgoHand), and real-robot control of humanoid robots and dexterous hands. The team has built the world's largest ten-million-scale human motion database as well as a first-person hand dataset, both of which are becoming core infrastructure driving the industry's development. The company focuses on research and development of general-purpose humanoid robot models. It aims to give robots human-level manipulation and locomotion skills through internet video data and multimodal large models, and to provide highly generalizable technical solutions to robot hardware manufacturers and to customers in their application scenarios.