BeingBeyond's New Breakthrough: BumbleBee, a Universal Whole-Body Control Paradigm for Humanoid Robots

Recently, the BumbleBee team from Zhizai Wujie proposed a complete training pipeline for whole-body control of humanoid robots: "Foundation - Clustering - Iteration - Distillation". The pipeline accounts for both the diversity of humanoid motions and real-world adaptability, offering a new training paradigm for general and agile humanoid robot control.

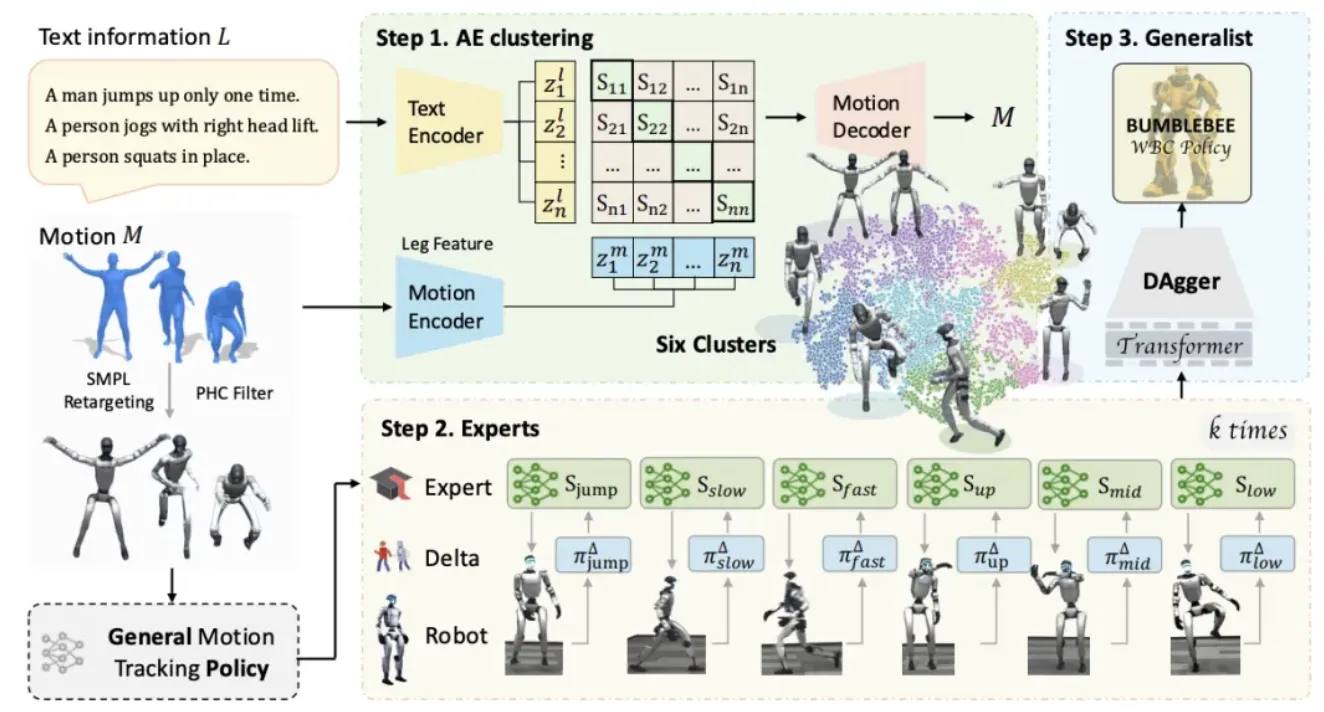

First, the study trains a base whole-body control model on the AMASS dataset. It then clusters the motions into different types and trains a corresponding expert control model for each cluster. Next, these expert models are deployed on real robots to collect execution trajectories. From the collected trajectories, a delta action model (an action-increment model) is trained for each expert to narrow the sim-to-real gap. Finally, knowledge distillation merges the fine-tuned expert models into a single, more robust and generalizable universal control model.

Key innovations of the paper

Expert-Generalist Training Paradigm: Rather than directly training a single general policy, BumbleBee first clusters motions by semantics and dynamic features, trains a separate expert control policy for each cluster, and then distills the experts' knowledge into a generalist policy, which alleviates cross-task conflicts.

Multimodal self-supervised clustering: A motion autoencoder is combined with text-semantic alignment, and explicit leg features such as foot contact and velocity are added, so that motion types such as jumping, slow walking, and in-place upper-limb movements can be distinguished in the latent space.

Clustering-based sim-to-real compensation: BumbleBee extends the delta action method of the ASAP framework by training a separate delta action model for each motion cluster. Compared with a single unified delta model, this more effectively reduces the sim-to-real deviation caused by differences between motion categories.

AE Clustering: The pipeline processes 8179 high-quality motion trajectories from AMASS that have been filtered by PHC. A Transformer encodes the motion sequences, for which SMPL joint axis-angles and root coordinates are converted into 3D joint positions and redundant joints are removed. Leg relative velocities and ground-contact signals are added to strengthen the dynamic representation. In parallel, BERT encodes the text descriptions from the HumanML3D dataset so that motion and text representations can be aligned. Finally, clustering is performed in the representation space on the motion encodings.
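
To make the role of the leg features concrete, here is a minimal sketch of clustering clips by foot speed and ground contact. It is a toy stand-in, not the method described above: it uses two hand-crafted features in place of the learned Transformer autoencoder and text alignment, and it assumes k-means, which the article does not specify. The names `leg_features`, `make_clip`, and `kmeans`, the contact-height threshold, and the synthetic clips are all hypothetical.

```python
import math

def leg_features(foot_xyz, dt=1.0 / 30.0, contact_height=0.05):
    """Return (mean foot speed in m/s, fraction of frames in ground contact).

    Assumption: a foot counts as "in contact" when its height is below a
    fixed threshold. The article does not say how contact is detected.
    """
    speeds = [math.dist(a, b) / dt for a, b in zip(foot_xyz, foot_xyz[1:])]
    contacts = [p[2] < contact_height for p in foot_xyz]
    return (sum(speeds) / len(speeds), sum(contacts) / len(contacts))

def make_clip(step, lift, frames=30):
    """Synthetic single-foot trajectory: forward `step` per frame, periodic lift."""
    return [(i * step, 0.0, lift * abs(math.sin(math.pi * i / 10)))
            for i in range(frames)]

def kmeans(points, k, iters=20):
    """Plain k-means with deterministic farthest-point initialization."""
    centers = [points[0]]
    while len(centers) < k:
        # Next center: the point farthest from all centers chosen so far.
        centers.append(max(points,
                           key=lambda p: min(math.dist(p, c) for c in centers)))
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [min(range(k), key=lambda j: math.dist(p, centers[j]))
                  for p in points]
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centers[j] = tuple(sum(x) / len(members) for x in zip(*members))
    return labels

# Hypothetical clips: two per motion type, with slightly different parameters.
clips = {
    "in_place_a": make_clip(0.0, 0.0),
    "in_place_b": make_clip(0.0, 0.01),
    "walk_a": make_clip(0.02, 0.08),
    "walk_b": make_clip(0.025, 0.09),
    "jump_a": make_clip(0.0, 0.5),
    "jump_b": make_clip(0.005, 0.6),
}
names = list(clips)
labels = kmeans([leg_features(clips[n]) for n in names], k=3)
clusters = dict(zip(names, labels))
print(clusters)
```

Even in this toy setting, foot speed and contact ratio alone separate in-place, walking, and jumping clips, which is consistent with the motivation for adding explicit leg features to the learned representation.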

Expert Learning: First, a base control policy is trained on the entire dataset and serves as the initialization for the expert models. Each expert is then fine-tuned on its own motion cluster, as determined by the clustering results, to obtain a more targeted model. Next, the expert models are deployed on real robots to collect trajectories, and a delta action model is trained for each cluster individually from these trajectories. The delta action models are then frozen while the experts are fine-tuned, which compensates for the deviation between simulation and reality. Through iterative updates, each expert gradually improves in a cycle of "better policy → higher-quality data → more accurate delta model → re-optimized expert".
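
The per-cluster compensation step can be illustrated with a deliberately simple sketch. Everything here is an assumption made for illustration: a 1-D system where the "real" actuator gain differs from the simulator's and differs between clusters, a linear delta model `delta = w * a`, and a closed-form least-squares fit. In ASAP-style methods the delta model is a learned network rather than a single scalar, so this only shows the idea of fitting one correction per cluster versus one unified correction.

```python
import random

DT = 0.02  # hypothetical control time step

def sim_step(s, a):
    """Simulator dynamics: ideal actuator with unit gain."""
    return s + a * DT

def real_step(s, a, gain):
    """Stand-in for the real robot: the actuator gain differs from the sim."""
    return s + gain * a * DT

def compensated_sim_step(s, a, w):
    """Simulator with a frozen linear delta action model applied to the action."""
    return sim_step(s, a + w * a)

def collect(gain, n, rng):
    """Roll out random actions on the 'real' system, logging (s, a, s_next)."""
    data, s = [], 0.0
    for _ in range(n):
        a = rng.uniform(-1.0, 1.0)
        s_next = real_step(s, a, gain)
        data.append((s, a, s_next))
        s = s_next
    return data

def fit_delta(data):
    """Least-squares w so that compensated_sim_step(s, a, w) matches s_next."""
    num = sum(a * (s_next - s) for s, a, s_next in data)
    den = DT * sum(a * a for _, a, _ in data)
    return num / den - 1.0

def sim_to_real_error(data, w):
    """Mean absolute one-step gap between compensated sim and real data."""
    return sum(abs(compensated_sim_step(s, a, w) - s_next)
               for s, a, s_next in data) / len(data)

rng = random.Random(0)
# Hypothetical clusters whose real-world gains deviate by different amounts.
rollouts = {"walk": collect(0.9, 200, rng), "jump": collect(0.6, 200, rng)}
per_cluster = {name: fit_delta(data) for name, data in rollouts.items()}
unified = fit_delta(rollouts["walk"] + rollouts["jump"])

jump_err_cluster = sim_to_real_error(rollouts["jump"], per_cluster["jump"])
jump_err_unified = sim_to_real_error(rollouts["jump"], unified)
print(per_cluster, unified, jump_err_cluster, jump_err_unified)
```

In this toy, the unified model settles on a compromise correction that fits neither cluster, while each per-cluster model recovers its own gain mismatch. Fine-tuning an expert would then run in `compensated_sim_step` with its cluster's `w` held fixed.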

Generalist Distillation: Once the expert models and delta action models have converged, the fusion phase begins. Using the DAgger framework, the expert models of all clusters are distilled simultaneously, and the data distribution is adjusted during training to keep the clusters balanced and avoid bias. Architecturally, a Transformer serves as the backbone of the generalist controller to strengthen temporal modeling. The resulting generalist policy achieves a good balance between agility and robustness, and outperforms both a single expert model and a general model trained directly.
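
The distillation loop can be sketched as a small DAgger example with cluster-balanced rollouts. This is a toy under stated assumptions, not the paper's implementation: the state is 1-D, each expert is a proportional controller with a hypothetical per-cluster gain, the student is a two-parameter linear model fitted by least squares instead of a Transformer, and balance is enforced by simply giving every cluster the same number of episodes.

```python
import random

DT = 0.1
REFS = {"walk": 0.2, "jump": 1.0}          # hypothetical reference targets
EXPERT_GAIN = {"walk": 1.0, "jump": 3.0}   # hypothetical expert gains

def expert_action(cluster, s):
    """Per-cluster expert: proportional tracking of its reference."""
    return EXPERT_GAIN[cluster] * (REFS[cluster] - s)

def features(s, ref):
    err = ref - s
    return (err, ref * err)

def student_action(w, s, ref):
    f = features(s, ref)
    return w[0] * f[0] + w[1] * f[1]

def fit(dataset):
    """Two-parameter least squares via the normal equations (Cramer's rule)."""
    a11 = sum(f[0] * f[0] for f, _ in dataset)
    a12 = sum(f[0] * f[1] for f, _ in dataset)
    a22 = sum(f[1] * f[1] for f, _ in dataset)
    b1 = sum(f[0] * y for f, y in dataset)
    b2 = sum(f[1] * y for f, y in dataset)
    det = a11 * a22 - a12 * a12
    return ((b1 * a22 - b2 * a12) / det, (a11 * b2 - a12 * b1) / det)

def dagger(iterations=3, episodes=20, horizon=15, seed=0):
    rng = random.Random(seed)
    clusters = sorted(REFS)
    w = (0.0, 0.0)   # untrained student
    dataset = []     # aggregated across all iterations
    for _ in range(iterations):
        for ep in range(episodes):
            # Round-robin over clusters: equal episodes per cluster,
            # regardless of how many motions each cluster contains.
            cluster = clusters[ep % len(clusters)]
            ref = REFS[cluster]
            s = rng.uniform(-0.5, 0.5)
            for _ in range(horizon):
                # Label the state visited by the student with the expert action.
                dataset.append((features(s, ref), expert_action(cluster, s)))
                # Advance the rollout with the student's own action.
                s += student_action(w, s, ref) * DT
        w = fit(dataset)
    return w

w = dagger()
print(w)
```

Because the states are generated by the student but labeled by the matching expert, the student is trained on the distribution it will actually encounter, and the round-robin schedule keeps either cluster from dominating the aggregated dataset.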

Experimental verification

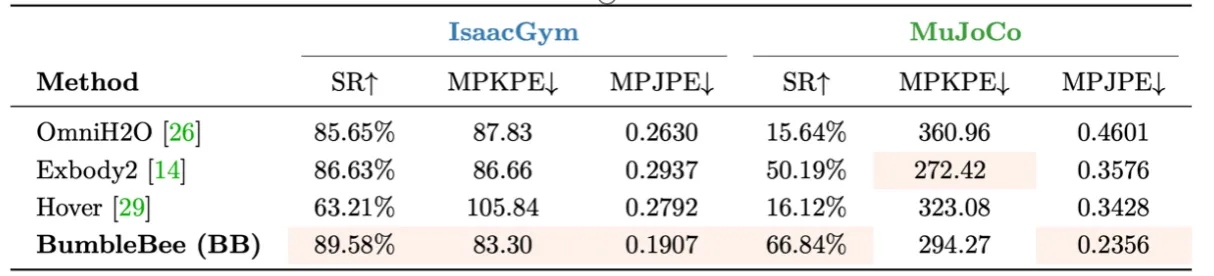

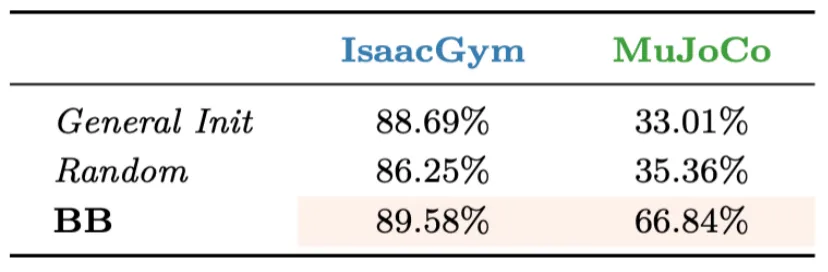

1. Comparison with baselines

On the MuJoCo platform, which is closer to real dynamics, BumbleBee achieves a success rate of 66.84%, significantly higher than Exbody2 (50.19%), while all other baselines are below 40%. On IsaacGym, BumbleBee also outperforms the comparison methods in all three metrics: success rate, MPJPE, and MPKPE.
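
For readers unfamiliar with the three metrics, the sketch below shows one plausible way to compute them. The definitions are assumptions, since the article does not spell them out: MPKPE is taken as the mean Euclidean distance between tracked and reference 3D keypoints, MPJPE as the mean absolute joint-angle error (as in some humanoid tracking evaluations), and success rate as the percentage of episodes flagged as successful by some external criterion. The function names are hypothetical.

```python
import math

def mpkpe(pred, ref):
    """Mean per-keypoint position error (assumed definition).

    pred/ref: list of frames, each a list of (x, y, z) keypoints.
    """
    dists = [math.dist(p, r)
             for pf, rf in zip(pred, ref)
             for p, r in zip(pf, rf)]
    return sum(dists) / len(dists)

def mpjpe(pred_q, ref_q):
    """Mean per-joint position error over joint angles (assumed definition).

    pred_q/ref_q: list of frames, each a list of joint angles in radians.
    """
    errs = [abs(p - r)
            for pf, rf in zip(pred_q, ref_q)
            for p, r in zip(pf, rf)]
    return sum(errs) / len(errs)

def success_rate(episode_ok):
    """Percentage of episodes marked successful."""
    return 100.0 * sum(episode_ok) / len(episode_ok)

# Tiny made-up example: one frame, two keypoints, two joints, four episodes.
kp_err = mpkpe([[(0.0, 0.0, 0.1), (1.0, 0.2, 0.0)]],
               [[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]])
jt_err = mpjpe([[0.1, 0.5]], [[0.0, 0.7]])
sr = success_rate([True, True, False, True])
print(kp_err, jt_err, sr)
```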

2. Analysis of clustering and the role of experts

Three settings are compared: direct training of a general model without experts (General Init), experts trained on random clusters (Random), and BumbleBee. Their success rates on MuJoCo are 33.01%, 35.36%, and 66.84%, respectively. These results indicate that well-founded clustering combined with expert learning is significantly better than random partitioning or direct training of a general model.

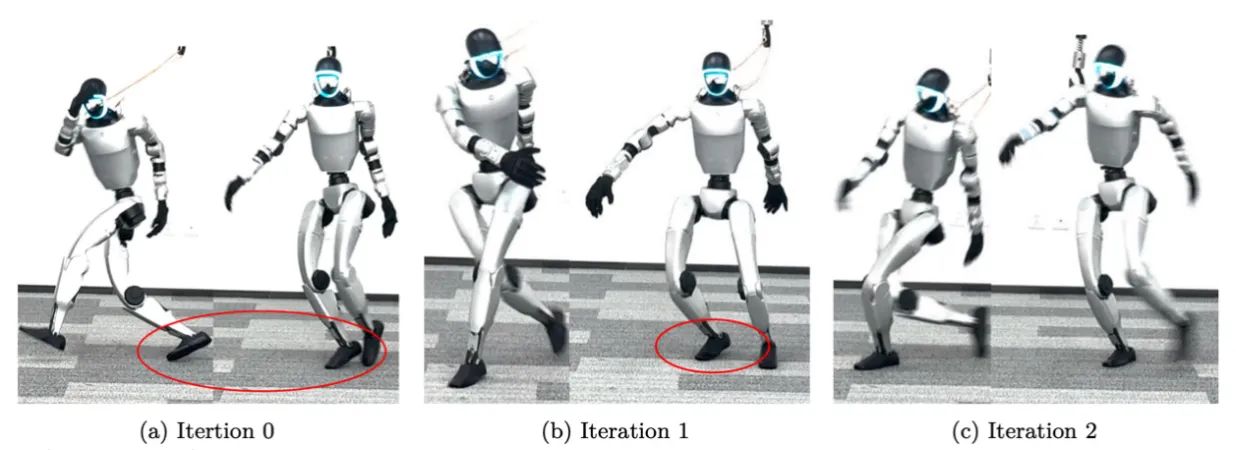

3. Real robot experiments

Iter 0 (not fine-tuned with the delta action model): The robot cannot maintain stability, fails to land, and causes the system to crash.

Iter 1: Stability improves significantly, but the robot still has difficulty lifting its feet and its body trembles.

Iter 2: The robot smoothly tracks the reference motion and maintains overall balance.

About BeingBeyond: Intelligence Without Boundaries