BeingBeyond's latest result: the first transfer of tactile data from human hands to robot hands, targeting a key industry pain point

The BeingBeyond team recently proposed UniTacHand, a solution for human-to-robot tactile data transfer. Using a two-stage framework of "morphological unification + feature alignment", it bridges human tactile sensing and dexterous-hand tactile sensing with only 10 minutes of paired human-robot data, enabling zero-shot skill transfer.

UniTacHand's Core Breakthrough: Bridging the Gap Between Human Touch and Dexterous Hand Touch with "Unified Representation"

In complex scenarios with visual occlusion and dense contact, tactile perception is central to human-level dexterous manipulation by robots. However, two obstacles stand between learning from human data and deploying policies on real robots: high-quality robot tactile data is scarce, and human and robot hands differ in both morphology and sensors.

Current mainstream approaches fall into three categories, each with its own limitation:

- Training on massive real-robot data, which places extremely high demands on data quantity and quality;
- Training policies in simulation, for example with reinforcement learning, and then deploying them on real robots, which incurs large time costs and is limited by the sim-to-real gap;
- Learning from human data, which is constrained by the embodiment gap: because human hands and real robots differ in body structure, the data is difficult to transfer directly to real-world robots.

Even the few methods that attempt tactile transfer apply only to simple grippers and cannot be adapted to high-degree-of-freedom dexterous hands.

UniTacHand paper cover

UniTacHand, developed by the BeingBeyond and Peking University team, rests on one key insight: human hands and robotic dexterous hands manipulate objects in essentially the same way. If a universal representation can be found that removes morphological differences while keeping the physical meaning of the tactile signal, skills can be transferred directly.

Based on this, the team uses the 2D UV mapping of the MANO hand model as the carrier for a unified representation and combines it with a contrastive learning framework. This addresses the two core problems of "morphological heterogeneity" and "feature misalignment" and enables zero-shot transfer of tactile policies from human hands to dexterous hands.

1. Morphological Unification: MANO UV Mapping Bridges the Gap Between Humans and Robots

Dexterous hands from different vendors have different tactile sensor layouts and hardware structures, so their tactile information differs substantially from one embodiment to another. UniTacHand brings the heterogeneous human and robot data into one format by projecting both onto the UV map space obtained by unwrapping the standard MANO hand model into 2D:

- Human tactile data: the correspondence between sensor regions and the surface vertices of the MANO model is annotated manually, and the sparse pressure signals are spread by bilinear interpolation into an 891-dimensional UV map, preserving the spatial structure of the tactile information;

- Post-processing: Gaussian smoothing and a mask over the valid regions are applied so that the tactile signals are more plausible and redundant information is reduced.

This processing not only reduces the spatial heterogeneity but also naturally encodes where contact occurs and how strong the normal pressure is, laying the foundation for the subsequent transfer.
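The mapping steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the team's implementation: the 27 x 33 grid is simply one factorization of 891, the `(u, v, pressure)` input format, the function names, and the kernel settings are all hypothetical, and the sensor-to-vertex annotation is assumed to have already produced normalized UV coordinates.

```python
import math

# Assumed grid size: 27 x 33 is one factorisation of the 891 dimensions
# mentioned in the text; the real UV layout may differ.
HEIGHT, WIDTH = 27, 33


def splat_bilinear(readings, height=HEIGHT, width=WIDTH):
    """Spread sparse (u, v, pressure) readings over the four nearest cells.

    u and v are assumed to be normalised UV coordinates in [0, 1].
    """
    grid = [[0.0] * width for _ in range(height)]
    for u, v, pressure in readings:
        x, y = u * (width - 1), v * (height - 1)
        x0, y0 = int(x), int(y)
        x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
        fx, fy = x - x0, y - y0
        grid[y0][x0] += pressure * (1 - fx) * (1 - fy)
        grid[y0][x1] += pressure * fx * (1 - fy)
        grid[y1][x0] += pressure * (1 - fx) * fy
        grid[y1][x1] += pressure * fx * fy
    return grid


def smooth(grid, radius=1, sigma=1.0):
    """Separable Gaussian blur; indices are clamped at the borders."""
    offsets = range(-radius, radius + 1)
    weights = [math.exp(-(d * d) / (2 * sigma * sigma)) for d in offsets]
    total = sum(weights)
    kernel = [w / total for w in weights]
    height, width = len(grid), len(grid[0])

    def clamp(index, size):
        return max(0, min(size - 1, index))

    # Blur along each row, then along each column.
    rows = [[sum(k * grid[y][clamp(x + d, width)] for d, k in zip(offsets, kernel))
             for x in range(width)] for y in range(height)]
    return [[sum(k * rows[clamp(y + d, height)][x] for d, k in zip(offsets, kernel))
             for x in range(width)] for y in range(height)]


def apply_mask(grid, mask):
    """Zero every cell that lies outside the valid hand region."""
    return [[value if keep else 0.0 for value, keep in zip(row, mask_row)]
            for row, mask_row in zip(grid, mask)]


def tactile_uv_map(readings, mask):
    """Sparse readings -> smoothed, masked, flattened UV vector."""
    grid = apply_mask(smooth(splat_bilinear(readings)), mask)
    return [value for row in grid for value in row]


full_mask = [[True] * WIDTH for _ in range(HEIGHT)]
uv_vector = tactile_uv_map([(0.5, 0.5, 1.0)], full_mask)
print(len(uv_vector))  # 891
```

Because the human glove and the robot hand would both be written into the same grid, a downstream policy sees a fixed-size input regardless of which embodiment produced the signal.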

2. Feature Alignment: Constructing a Shared Latent Space Between Humans and Machines Through Contrastive Learning

A unified format alone does not remove differences in data distribution. When a human hand and a robotic hand perform the same manipulation task, their hand postures are not necessarily the same. For example, a human hand tends to wrap the whole object in the palm when grasping, whereas dexterous hands such as the Inspire hand often pinch the object with the fingers, so different regions of the tactile map are activated. UniTacHand therefore uses a dedicated contrastive learning framework so that the tactile features of human hands and dexterous hands share one representation in the latent space.

For representation learning, the team proposes a dual-encoder architecture: human-hand data and dexterous-hand data each pass through two encoding paths, a tactile input encoder and a hand posture encoder, so that tactile and motion information are represented jointly. Three loss terms, a contrastive alignment loss (InfoNCE), a reconstruction loss (MSE), and a domain adversarial loss (BCE), are used to learn a general latent space shared by the tactile features of human hands and dexterous hands.
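To make the three loss terms concrete, the following is a minimal standard-library sketch of each one. It is not the team's code: the one-directional form of InfoNCE, the temperature value, and all function names are assumptions, and the source does not say how the terms are weighted or how the adversarial term is applied to the encoders, so the terms are shown separately rather than combined.

```python
import math


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _normalize(vector):
    norm = math.sqrt(_dot(vector, vector)) or 1.0
    return [x / norm for x in vector]


def info_nce(human_latents, robot_latents, temperature=0.1):
    """Contrastive alignment: row i of each list is assumed to be a pair.

    Each human latent should be closer to its paired robot latent than to
    any other robot latent in the batch (human -> robot direction only).
    """
    humans = [_normalize(v) for v in human_latents]
    robots = [_normalize(v) for v in robot_latents]
    loss = 0.0
    for i, human in enumerate(humans):
        logits = [_dot(human, robot) / temperature for robot in robots]
        peak = max(logits)  # subtract the max for numerical stability
        log_sum = peak + math.log(sum(math.exp(l - peak) for l in logits))
        loss += log_sum - logits[i]
    return loss / len(humans)


def mse(prediction, target):
    """Reconstruction loss between a decoded signal and the input signal."""
    return sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(target)


def bce(probabilities, labels, eps=1e-7):
    """Binary cross-entropy of a domain classifier (1 = human, 0 = robot)."""
    total = 0.0
    for p, y in zip(probabilities, labels):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(labels)


paired = info_nce([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
mismatched = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(paired < mismatched)  # True: aligned pairs give the lower loss
```

In a domain adversarial setup the classifier is trained to lower the BCE term while the encoders are trained to make the two domains indistinguishable; a domain classifier that cannot do better than chance indicates that human and robot latents overlap.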

2D UV mapping of the MANO hand model

Zero-shot transfer from 10 minutes of paired data

Traditional transfer methods require millions of human or robot samples for large-scale pre-training, followed by task-specific fine-tuning before they converge. The core advantage of UniTacHand is its data efficiency: it needs only 10 minutes (16k frames at 40Hz) of paired human-robot data to train the alignment model, which is then combined with low-cost human tactile data to achieve efficient transfer.

The paired dataset built by the team covers 50 categories of everyday objects (such as fruits, beverage bottles, and toys), contains 688 manipulation trajectories, and spans contact scenarios such as grasping, pressing, and moving. Even a paired dataset this small, once augmented with physics-inspired methods, is enough for the model to learn a robust cross-domain mapping. More importantly, human tactile data is far cheaper to collect than robot data: ordinary pressure-sensing gloves and motion capture equipment are sufficient for large-scale collection, which removes the dependence on expensive real-robot data.

UniTacHand experimental results

Real-robot experiments: human data alone transfers tactile skills to a dexterous hand, and mixed data outperforms robot-only data

To verify the method, the team tested five core tasks on a platform combining an Inspire 6-DoF dexterous hand with a RealMan 6-DoF robotic arm. UniTacHand outperformed the traditional baselines across the board:

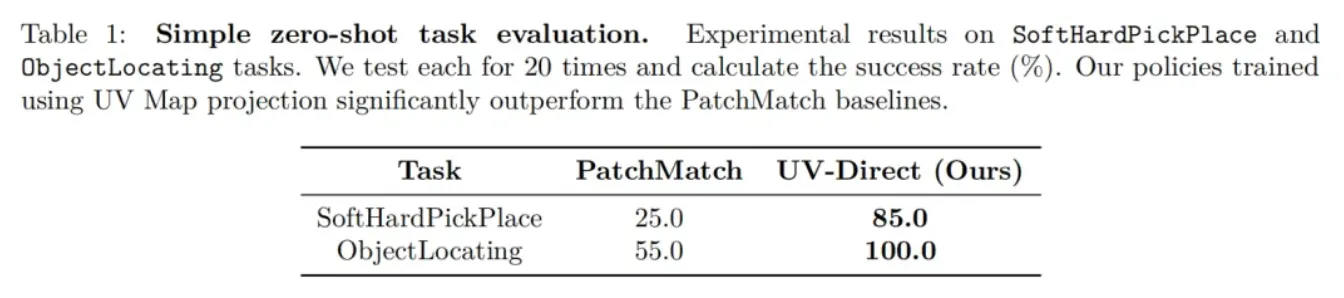

1. Unified UV Map for Zero-Shot Transfer: No Robot Data Required, Deployed Directly

- ObjectLocating (object localization): The robot determines the position of an object through touch, adjusts the position of the end of the robotic arm, and then grasps the object. UniTacHand reaches a 100% success rate (the PatchMatch baseline reaches only 55%).

- SoftHardPickPlace (soft/hard sorting and placement): The robot distinguishes hard objects from soft ones through touch and places them into the corresponding baskets. UniTacHand reaches an 85.0% success rate (the PatchMatch baseline reaches only 25%).

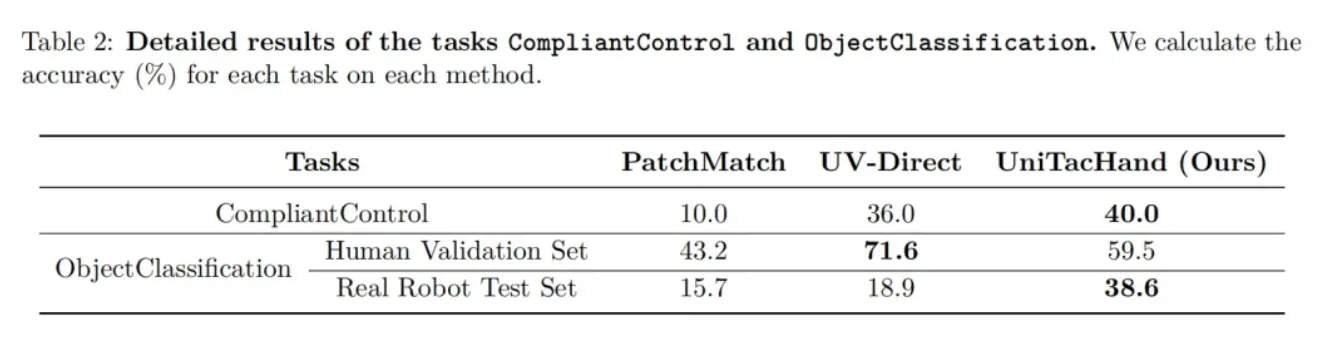

2. Learning representations from a small amount of paired data for zero-shot transfer

- CompliantControl: When a person pushes or pulls on the palm of the dexterous hand, the end of the robotic arm moves in the direction of the applied force. UniTacHand determines the movement direction with 40% accuracy (far above PatchMatch's 10.0%);

- ObjectClassification: Classifying 10 categories of unseen objects (including different shapes and materials), with an accuracy rate of 38.6% on the robot test set (far exceeding UV-Direct's 18.9%).

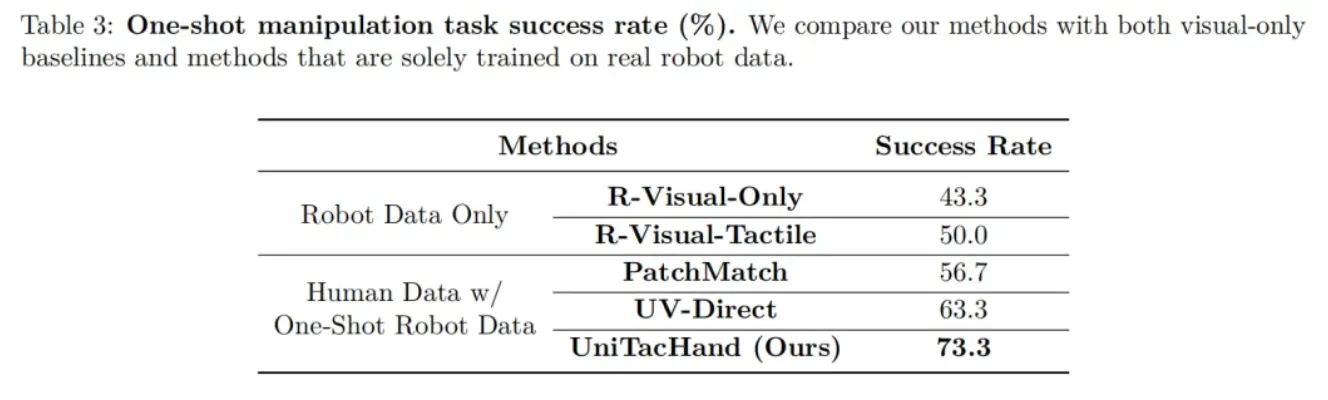

3. One-shot mixed training: human data + a single real-robot sample outperforms robot-only training

On tasks that are "visually confusing but tactilely distinguishable" (such as empty bottles versus bottles filled with water), UniTacHand trained on human data plus a single real-robot sample achieves a 73.3% success rate, well above methods trained on robot data alone (R-Visual-Only: 43.3%; R-Visual-Tactile: 50.0%). It is also 10 to 20 percentage points higher than baselines such as PatchMatch and UV-Direct that use the same human data plus a single real-robot sample.

As the first unified representation framework to achieve zero-shot tactile skill transfer from human hands to dexterous hands, this research not only addresses the core bottleneck of tactile transfer but also constructs an efficient path of "human data → unified representation → robot skills".

The team stated that in the future, they will further expand the scale of human tactile data collection, explore integrating the tactile modality into the Vision-Language-Action (VLA) foundation model, promote the evolution of robots from "vision-dominated" to "multi-modal collaboration", and enable dexterous manipulation to truly enter daily life.

About BeingBeyond: Intelligence Without Boundaries

BeingBeyond has pioneered the paradigm of training general embodied models on large-scale human data. Both its dexterous hand manipulation models (Being-H series) and its humanoid robot mobile manipulation models (Being-M series) deliver industry-leading performance and can be deployed across different embodiments. The world's largest first-person hand dataset and full-body posture dataset, both built by the team, are becoming the core foundation for model iteration. BeingBeyond focuses on the research and development of general humanoid robot models, providing robot hardware manufacturers and application-side customers with highly generalizable, deployable embodied models.